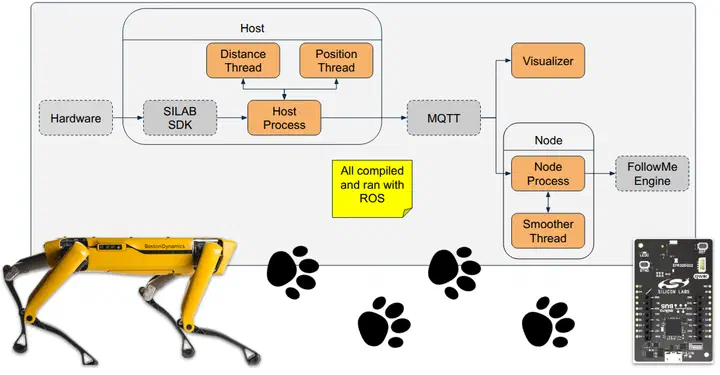

System Architecture

Abstract

Human-following robots have achieved commercial adoption in structured indoor environments such as healthcare facilities, airports, and educational campuses, where environmental conditions are predictable and operational risk is low. However, their deployment in high-risk, unstructured domains such as firefighting, search and rescue, and defense operations remains limited. Existing platforms rely either on visual tracking, which struggles in low light or when the user leaves the camera's field of view, or on radio-based following, which provides reasonable accuracy but suffers from poor resolution when using a single locator, from signal interference, and from limited applicability in certain environments. To overcome these limitations, we present a system that fuses a depth-camera perception pipeline with a single-antenna Bluetooth Angle-of-Arrival (AoA) direction estimator mounted on a quadruped robot. Our novel visual tracker runs a YOLOv8-n detector, extracts multi-modal embeddings (RGB, depth, pose, and colored arm-sleeve histograms), and re-identifies the operator at 5 Hz on a CPU-only platform. When visual contact is lost or degraded, the AoA module supplies a heading cue that steers the robot until the operator is back in view. Experiments were conducted in unknown, unstructured indoor and outdoor environments. Using a Spot legged robot, we show that the hybrid approach reduces tracking interruptions by 70% compared with vision-only tracking, while maintaining a lateral position error of 0.54 m (RMSE) and a heading error of 0.31 rad. The result is a hybrid system capable of following a user in real time across different environments. We call our system CHASER (Collaborative Helper Autonomous System for Exploration Robots), a wearable-controlled multimodal follower designed for field deployment.
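
The hand-off between the visual tracker and the AoA module can be illustrated with a minimal sketch. The abstract does not specify the arbitration rule, so the confidence threshold, the `VisualTrack` type, and the `select_heading` function below are hypothetical names and assumptions chosen for illustration, not the CHASER implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VisualTrack:
    """Hypothetical output of the visual tracker for one frame."""
    bearing_rad: float  # operator bearing in the robot frame
    confidence: float   # re-identification score in [0, 1]


def select_heading(visual: Optional[VisualTrack],
                   aoa_bearing_rad: Optional[float],
                   min_confidence: float = 0.5) -> Optional[float]:
    """Pick the bearing to steer toward.

    Assumed rule: trust vision while the re-identification score is
    above a threshold, otherwise fall back to the AoA heading cue.
    Returns None when neither cue is available.
    """
    if visual is not None and visual.confidence >= min_confidence:
        return visual.bearing_rad
    return aoa_bearing_rad
```

For example, `select_heading(VisualTrack(0.2, 0.1), -1.0)` falls back to the AoA bearing because the visual confidence is below the assumed threshold. A deployed system would likely also smooth the bearing over time to avoid abrupt switches between the two cues.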